The scaffolding behind Accelerate's discovery engine.

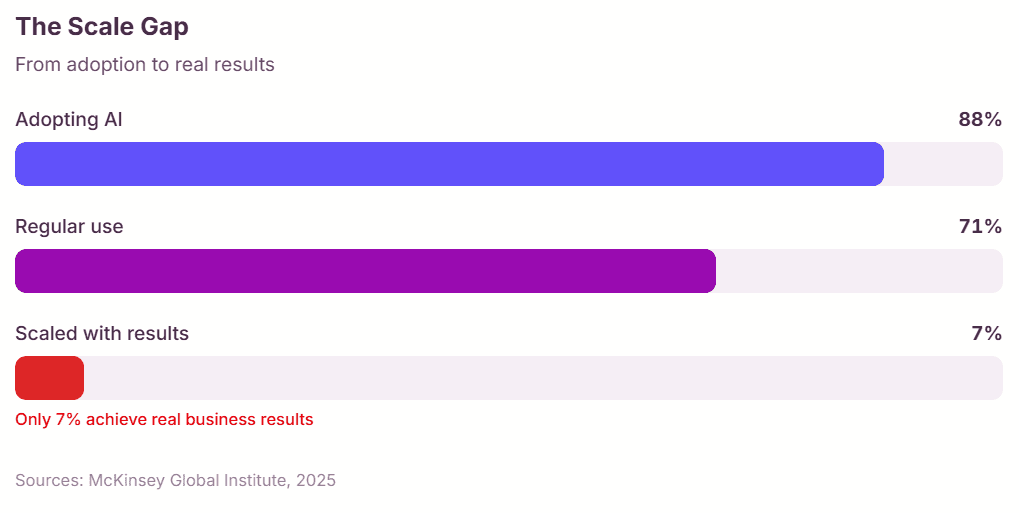

AI initiatives arrive from different corners of an organisation, and pulling them onto common ground always takes work. Skip that coordination and the cost carries straight into deployment. The Knowledge Bank already does most of that work.